Numerical Analysis | Vibepedia

Overview

Numerical analysis is the branch of mathematics dedicated to developing and analyzing algorithms that use numerical approximation to solve problems of continuous mathematics. Unlike symbolic computation, which manipulates exact expressions, numerical analysis works with real or complex numbers and relies on approximation, because exact solutions are often too costly or impossible to obtain. Its applications span physics, engineering, economics, biology, and even the arts, and the exponential growth in computing power has made increasingly sophisticated and realistic mathematical models feasible. From predicting the motions of planets and stars with ordinary differential equations, to analyzing vast datasets with numerical linear algebra, to simulating intricate biological systems with stochastic differential equations, numerical analysis provides the computational engine for scientific discovery and technological advancement. Its foundational algorithms, though often invisible to the end user, are the bedrock upon which much of modern computation and scientific understanding is built.
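To make "numerical approximation" concrete for an ordinary differential equation, here is a minimal sketch of the forward Euler method. The test problem y' = y, y(0) = 1 (exact solution e^t) and the helper name `euler` are illustrative assumptions, not taken from any particular library; practical orbit computations use higher-order integrators.

```python
import math

def euler(f, y0, t0, t1, n):
    """Forward Euler: advance y' = f(t, y) from t0 to t1 in n equal steps."""
    h = (t1 - t0) / n
    t, y = t0, y0
    for _ in range(n):
        y = y + h * f(t, y)  # follow the tangent line for one step of size h
        t = t + h
    return y

# Illustrative test problem: y' = y, y(0) = 1, so y(1) = e exactly.
for n in (10, 100, 1000):
    approx = euler(lambda t, y: y, 1.0, 0.0, 1.0, n)
    print(n, approx, abs(math.e - approx))
```

Each tenfold increase in the number of steps cuts the error roughly tenfold, the behavior expected of a first-order method.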

🎵 Origins & History

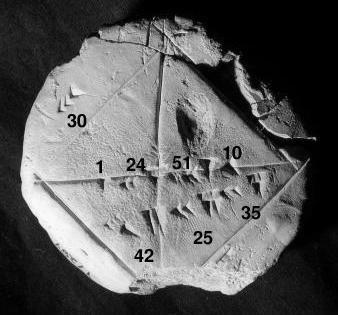

The roots of numerical analysis stretch back to antiquity. Early examples include Babylonian methods for approximating square roots; the clay tablet YBC 7289 records an approximation of √2 accurate to about six decimal digits. Archimedes used the method of exhaustion, a precursor of numerical integration, to calculate areas and volumes, and bounded pi between 223/71 and 22/7 using polygons with 96 sides. The development of calculus by Isaac Newton and Gottfried Wilhelm Leibniz provided a powerful theoretical framework, but many problems remained analytically intractable. Leonhard Euler developed numerous numerical methods for solving differential equations and evaluating integrals, laying crucial groundwork. The 19th century saw significant advances by mathematicians such as Carl Friedrich Gauss, who developed methods for solving systems of linear equations and approximating roots of polynomials, and Augustin-Louis Cauchy, who contributed to the theory of convergence for iterative methods. The advent of electronic computers in the mid-20th century, however, truly revolutionized the field, transforming theoretical algorithms into practical tools capable of tackling problems of unprecedented scale and complexity.
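Archimedes' polygon argument can be sketched as a short iteration. This is a modern restatement assumed here for illustration, not a transcription of his text: for a unit circle, the semiperimeters of the circumscribed and inscribed regular n-gons are n·tan(pi/n) and n·sin(pi/n), and doubling n replaces them with their harmonic and geometric means respectively. Archimedes worked by hand with rational bounds on square roots rather than floating point, and the function name `archimedes_pi_bounds` is hypothetical.

```python
import math

def archimedes_pi_bounds(doublings=4):
    """Bound pi with inscribed and circumscribed regular polygons on a unit circle.

    Starts from hexagons and doubles the number of sides each step:
    6 -> 12 -> 24 -> 48 -> 96 after four doublings.
    """
    sides = 6
    upper = 2 * math.sqrt(3)  # circumscribed hexagon semiperimeter, 6*tan(pi/6)
    lower = 3.0               # inscribed hexagon semiperimeter, 6*sin(pi/6)
    for _ in range(doublings):
        upper = 2 * upper * lower / (upper + lower)  # harmonic mean: circumscribed 2n-gon
        lower = math.sqrt(upper * lower)             # geometric mean: inscribed 2n-gon
        sides *= 2
    return sides, lower, upper

sides, lo, hi = archimedes_pi_bounds()
print(sides, lo, hi)  # lo < pi < hi
```

Four doublings reach the 96-gon, and the resulting bounds lie inside Archimedes' published interval 223/71 < pi < 22/7.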

⚙️ How It Works

At its heart, numerical analysis is about transforming complex mathematical problems into a sequence of simpler arithmetic operations that a computer can execute. This often involves discretizing continuous problems, such as approximating a curve with a series of line segments or an integral with a sum of areas of rectangles or trapezoids. Key techniques include iterative methods, in which an initial guess is refined repeatedly until it converges to a solution within a desired tolerance (e.g., Newton's method for root-finding). Error analysis is paramount: numerical analysts study sources of error such as round-off error (due to finite-precision arithmetic) and truncation error (from cutting off infinite processes or replacing limits with finite steps) to ensure that computed results are reliable and accurate. The design and analysis of algorithms focus on efficiency (computational cost, often measured in floating-point operations) and stability (whether small errors introduced along the way stay small or are amplified as the computation proceeds). Libraries such as the Basic Linear Algebra Subprograms (BLAS) and the Linear Algebra Package (LAPACK) provide highly optimized implementations of fundamental numerical routines.
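The interplay between truncation and round-off error shows up in a short experiment. The sketch below approximates the derivative of e^x at x = 1 with a forward difference; `forward_difference` is a hypothetical helper. Truncation error shrinks roughly in proportion to h, while round-off error, caused by subtracting two nearly equal floating-point values, grows roughly like ε/h, where ε ≈ 2.2 × 10^-16 is the double-precision machine epsilon.

```python
import math

def forward_difference(f, x, h):
    """Approximate f'(x) with the one-sided difference (f(x + h) - f(x)) / h."""
    return (f(x + h) - f(x)) / h

exact = math.exp(1.0)  # d/dx e^x = e^x, so the true derivative at x = 1 is e
for h in (1e-1, 1e-4, 1e-8, 1e-12, 1e-15):
    # Large h: truncation error dominates. Tiny h: round-off error dominates.
    err = abs(forward_difference(math.exp, 1.0, h) - exact)
    print(f"h = {h:.0e}  error = {err:.2e}")
```

The total error is smallest near h ≈ √ε ≈ 10^-8; pushing h further toward 10^-15 makes the result worse, not better.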

📊 Key Facts & Numbers

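The global market for numerical simulation and modeling software, a direct application of numerical analysis, is projected to grow significantly. A double-precision floating-point number (IEEE 754 binary64), used extensively in numerical computations, occupies 64 bits and carries roughly 16 significant decimal digits; its machine epsilon is 2^-52 ≈ 2.2 × 10^-16. The fastest supercomputers can now perform more than 10^18 floating-point operations per second (exascale performance), enabling simulations that were unimaginable just a decade ago. The condition number of a matrix is always at least 1: values near 1 indicate a well-conditioned linear system, while values of 10^16 or higher indicate a severely ill-conditioned one. It measures how sensitive the solution is to small changes in the input data; as a rule of thumb, a condition number of 10^k can cost about k decimal digits of accuracy. Iterative algorithms can converge linearly, superlinearly, or quadratically. Linear convergence reduces the error by a roughly constant factor per iteration (bisection, for example, halves its bracketing interval at each step), whereas quadratic convergence, as in Newton's method near a simple root, roughly squares the error, so the number of correct digits about doubles with each iteration under ideal conditions. The fast Fourier transform (FFT) reduces the cost of computing a discrete Fourier transform of length n from O(n^2) to O(n log n), a monumental speedup for signal processing applications.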
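Quadratic convergence is easiest to see in Newton's method applied to f(x) = x^2 - a, which gives the update x ← (x + a/x)/2. The sketch below uses a = 2 and x0 = 1 as arbitrary illustrative choices; `newton_sqrt_iterates` is a hypothetical helper, not a library function.

```python
import math

def newton_sqrt_iterates(a, x0, steps):
    """Newton's method on f(x) = x**2 - a: x <- x - f(x)/f'(x) = (x + a/x) / 2."""
    xs = [x0]
    for _ in range(steps):
        x = xs[-1]
        xs.append(0.5 * (x + a / x))
    return xs

root = math.sqrt(2.0)
for k, x in enumerate(newton_sqrt_iterates(2.0, 1.0, 5)):
    print(k, x, abs(x - root))  # the error is roughly squared at every step
```

The printed errors fall from about 2 × 10^-6 to about 1.6 × 10^-12 in a single step: the exponent roughly doubles, which is what squaring the error means in practice, in contrast to the steady halving of bisection.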

👥 Key People & Organizations

Pioneers such as John von Neumann were instrumental in developing early computational methods and in understanding the theoretical underpinnings of the computing machines essential to numerical analysis. James H. Wilkinson, a Turing Award laureate, made profound contributions to the numerical analysis of linear algebra, particularly eigenvalue problems and rounding-error analysis. Cleve Moler, a co-founder of MathWorks, created MATLAB, a widely adopted environment for numerical computation and algorithm development. Organizations such as the Society for Industrial and Applied Mathematics (SIAM) and the Association for Computing Machinery (ACM) play crucial roles in fostering research, disseminating knowledge, and organizing conferences for numerical analysts. Leading research institutions such as Stanford and MIT host departments and research groups dedicated to computational science and numerical methods, attracting top talent.

🌍 Cultural Impact & Influence

Numerical analysis is the silent engine behind countless technological marvels and scientific breakthroughs. It underpins the weather forecasting models that guide daily life, the computational fluid dynamics (CFD) simulations used to design aircraft and automobiles, and the finite element analysis (FEA) that helps ensure the structural integrity of bridges and buildings. In medicine, it enables advanced imaging techniques such as magnetic resonance imaging (MRI) and the simulation of drug interactions. The entertainment industry relies on numerical methods for realistic animation and special effects in films and video games. Financial modeling, from risk assessment to algorithmic trading, depends heavily on sophisticated numerical algorithms. Widespread access to computing resources and tools, from hosted notebook services such as Google Colab to the open-source Jupyter ecosystem, has further amplified the cultural reach and practical application of numerical analysis, making complex computations accessible to a broader audience.

⚡ Current State & Latest Developments

The field is currently evolving rapidly, driven by advances in hardware and new algorithmic paradigms. The rise of machine learning and AI has introduced new numerical challenges, particularly in training deep neural networks, which involves massive-scale optimization with stochastic gradient descent and its variants. Specialized hardware such as graphics processing units (GPUs) and tensor processing units (TPUs) requires highly parallel numerical algorithms. Research into validated numerics (also called rigorous computation) is gaining traction; it aims to provide mathematical guarantees of accuracy and reliability for computed results, going beyond traditional error estimates. Furthermore, the growing demand for real-time simulation in areas such as autonomous driving and virtual reality pushes the boundaries of computational efficiency and algorithmic design. Integrating numerical analysis with symbolic computation is also an active area of research that seeks to combine the strengths of both approaches.
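One basic tool of validated numerics is interval arithmetic: every quantity is carried as a pair of floating-point bounds guaranteed to enclose the true value. The class below is a minimal sketch under stated assumptions: Python 3.9+ (for `math.nextafter`), finite inputs only, and a hypothetical `Interval` class supporting just addition and multiplication. Production interval libraries typically use the IEEE 754 directed rounding modes; Python does not expose those, so this sketch instead widens each result by one unit in the last place, which is looser but still a valid enclosure under round-to-nearest.

```python
import math
from dataclasses import dataclass
from fractions import Fraction

@dataclass(frozen=True)
class Interval:
    lo: float
    hi: float

    @staticmethod
    def around(x: float) -> "Interval":
        """Widen a float by one ulp each way so it also covers the decimal it was parsed from."""
        return Interval(math.nextafter(x, -math.inf), math.nextafter(x, math.inf))

    def __add__(self, other: "Interval") -> "Interval":
        # Round-to-nearest lands between the exact sum's neighbours, so stepping
        # one ulp outward on each side keeps the exact sum inside.
        return Interval(math.nextafter(self.lo + other.lo, -math.inf),
                        math.nextafter(self.hi + other.hi, math.inf))

    def __mul__(self, other: "Interval") -> "Interval":
        # The exact product range is spanned by the four endpoint products.
        products = [self.lo * other.lo, self.lo * other.hi,
                    self.hi * other.lo, self.hi * other.hi]
        return Interval(math.nextafter(min(products), -math.inf),
                        math.nextafter(max(products), math.inf))

    def contains(self, q: Fraction) -> bool:
        """Check enclosure exactly, using rational arithmetic."""
        return Fraction(self.lo) <= q <= Fraction(self.hi)

tenth = Interval.around(0.1)
fifth = Interval.around(0.2)
total = tenth + fifth
print(total, total.contains(Fraction(3, 10)))  # True: the exact value 3/10 is enclosed
print(0.1 + 0.2 == 0.3)                        # False in ordinary double precision
```

Ordinary floating point reports `0.1 + 0.2 == 0.3` as `False` without saying how far off it is, whereas the interval result comes with a guaranteed enclosure of the exact decimal answer.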

🤔 Controversies & Debates

One persistent debate revolves around the trade-off between accuracy and computational cost. An algorithm that would be exact in exact arithmetic can still produce errors when implemented on finite-precision machines, and more accurate methods usually demand more computation. Critics sometimes question the reliability of numerical results, especially in complex simulations where error propagation can be difficult to control fully. The choice of algorithm can significantly affect the outcome, prompting discussions about best practices and the potential for algorithmic bias. Another point of contention is the interpretability of results from complex, data-driven numerical models, particularly in AI, where understanding why a particular solution is reached can be as important as the solution itself. The ethical implications of using numerical models for decision-making in sensitive areas such as finance and healthcare also spark ongoing debate.
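The point that algorithm choice can change the outcome has a classic small-scale illustration, chosen here for illustration rather than taken from the source: computing a sample variance. The one-pass textbook formula subtracts two huge, nearly equal sums and loses every significant digit (catastrophic cancellation), while the two-pass formula, which subtracts the mean first, is accurate. Both function names are hypothetical.

```python
def variance_naive(xs):
    """Textbook one-pass formula: (sum of x^2 - (sum of x)^2 / n) / (n - 1)."""
    n = len(xs)
    s = sum(xs)
    sq = sum(x * x for x in xs)
    return (sq - s * s / n) / (n - 1)  # subtracts two nearly equal ~4e18 values

def variance_two_pass(xs):
    """Compute the mean first, then sum the squared deviations from it."""
    n = len(xs)
    mean = sum(xs) / n
    return sum((x - mean) ** 2 for x in xs) / (n - 1)

# Four samples sharing a large offset; the exact sample variance is 30.
data = [1e9 + 4, 1e9 + 7, 1e9 + 13, 1e9 + 16]
print(variance_naive(data))     # badly wrong because of catastrophic cancellation
print(variance_two_pass(data))  # 30.0
```

When the data cannot be stored for a second pass, Welford's online algorithm offers the same stability in a single pass.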

🔮 Future Outlook & Predictions

The future of numerical analysis is inextricably linked to the trajectory of computing power and the increasing complexity of the problems we seek to solve. Further integration with AI can be expected, leading to adaptive algorithms that learn and optimize themselves in real time. Quantum computing, while still nascent, holds the potential to revolutionize certain classes of numerical problems, particularly in linear algebra and optimization, with algorithms such as the Harrow–Hassidim–Lloyd (HHL) algorithm for linear systems and Grover's search algorithm offering potential speedups. The push for exascale and beyond computing

Key Facts

- Category: science
- Type: topic